Desktop Assessment and Feedback Utilities

The adoption of Canvas as the Virtual Learning Environment at Queen’s has brought many benefits for assessment and feedback. Student submissions can be annotated with feedback comments, and marking rubrics can be set up to guide student efforts and speed up grading and feedback. Together, these allow students to receive detailed, bespoke feedback while a consistent marking scheme is applied.

However, there are instances where grading submissions within Canvas may not be possible, for example when working offline. Submissions uploaded in certain formats (e.g. Excel workbooks, source code, or zip files) must also be downloaded and evaluated outside Canvas.

Regardless of the approach, similar issues almost inevitably appear across student submissions, so feedback provided for one student may be equally valuable for another. When grading electronically, this means markers end up retyping the same comments many times. A further challenge when grading manually and unaided is ensuring consistency, that is, that students who receive similar feedback also receive similar marks.

To streamline the process of generating high-quality feedback for students, I have developed a number of marking companion tools. Originally built in Excel and VBA, they have since been rewritten as desktop applications using Python and tkinter.

All source code is available on GitHub, and it has also been compiled into executables that can be downloaded from the project homepage and run without a Python installation. Documentation is available, and the applications and their key features are summarised below.

PyGrade

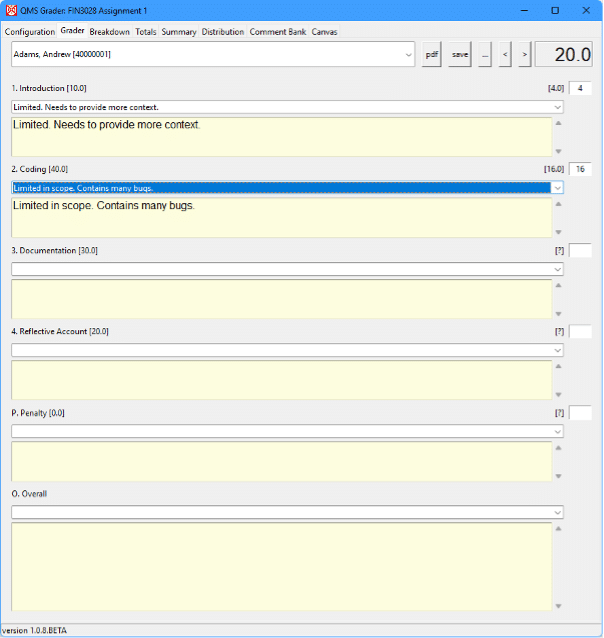

This utility is for assigning marks and providing feedback comments. Some initial (text file) configuration is required to establish student details (i.e. student id and name), grading components (i.e. question descriptor and available marks), and rubric (descriptors and marks).

To grade a question, feedback comments can be selected from a dropdown list, typed manually, or both. When a pre-existing comment is selected, its marks are assigned automatically. As marking progresses, all previously entered comments remain available for selection.
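The idea of reusing a comment together with its mark can be illustrated with a minimal Python sketch. This is not PyGrade's actual code: the `QuestionBank` class, its `grade` method, and the example comment are hypothetical names chosen for illustration, and it assumes each comment maps to exactly one mark per question.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class QuestionBank:
    """Comments seen so far for one question, with the mark each one earned.

    Hypothetical structure for illustration; not taken from PyGrade.
    """
    max_marks: float
    marks_by_comment: Dict[str, float] = field(default_factory=dict)

    def grade(self, comment: str, marks: Optional[float] = None) -> float:
        """Return the mark for a comment, remembering new comments for reuse."""
        if comment in self.marks_by_comment:
            # Existing comment: reuse its mark so that similar feedback
            # always carries a similar mark.
            return self.marks_by_comment[comment]
        if marks is None:
            raise ValueError("a new comment needs an explicit mark")
        if not 0 <= marks <= self.max_marks:
            raise ValueError("mark is outside the range for this question")
        self.marks_by_comment[comment] = marks
        return marks


bank = QuestionBank(max_marks=10)
# First student: the marker types a new comment and assigns a mark.
first = bank.grade("Correct method, arithmetic slip in final step.", marks=7)
# Later student: selecting the same comment assigns the same mark.
second = bank.grade("Correct method, arithmetic slip in final step.")
```

Keeping the mark alongside the comment is what ties consistency of feedback to consistency of marks in this sketch; a real tool might well let the marker override the suggested mark.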

Key features are:

- Dropdown class list

- Configurable number of questions

- Selectable feedback comments

- Automatic aggregation

- PDF feedback reports

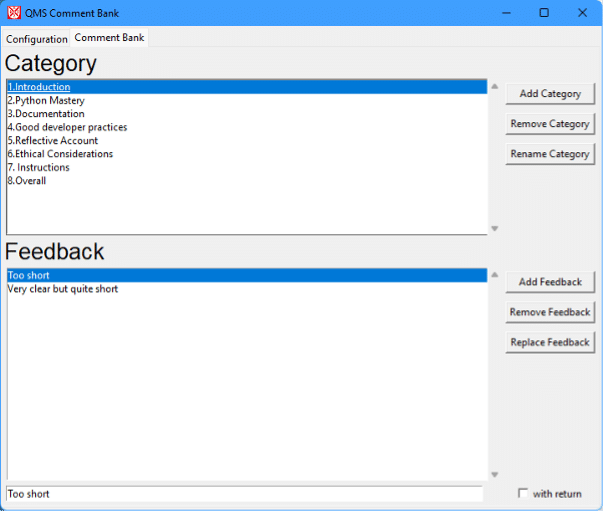

PyCommentBank

This simple utility maintains a collection of feedback comments grouped by category. Clicking a comment once copies it to the clipboard, ready to be pasted as an annotation into the student's work.

When marking, assessors can add recurring feedback comments to the comment bank which can then be easily selected for immediate annotation of student work. Comment banks can, of course, be reused for similar assignments or for future student cohorts.
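A comment bank of this kind can be sketched in a few lines of Python. The `CommentBank` class, the JSON storage format, and the `copy_to_clipboard` helper below are assumptions made for illustration rather than PyCommentBank's own design. The only library behaviour relied on is that tkinter widgets provide `clipboard_clear()` and `clipboard_append()`.

```python
import json
from collections import defaultdict


class CommentBank:
    """Feedback comments grouped by category (hypothetical structure)."""

    def __init__(self):
        self._comments = defaultdict(list)

    def add(self, category, comment):
        """Store a comment once per category, preserving insertion order."""
        if comment not in self._comments[category]:
            self._comments[category].append(comment)

    def comments(self, category):
        """Return a copy of the comments filed under a category."""
        return list(self._comments.get(category, []))

    def to_json(self):
        """Serialise the bank so it can be reused for a later cohort."""
        return json.dumps(self._comments, indent=2)

    @classmethod
    def from_json(cls, text):
        """Rebuild a bank from text produced by to_json()."""
        bank = cls()
        for category, comments in json.loads(text).items():
            for comment in comments:
                bank.add(category, comment)
        return bank


def copy_to_clipboard(widget, text):
    """Replace the clipboard contents with text.

    `widget` is any tkinter widget (for example the Tk root window).
    """
    widget.clipboard_clear()
    widget.clipboard_append(text)


bank = CommentBank()
bank.add("Referencing", "Cite the original source rather than a summary.")
bank.add("Structure", "State the conclusion before the supporting detail.")
restored = CommentBank.from_json(bank.to_json())
```

In a tkinter application, `copy_to_clipboard` would typically be called from the click handler of a listbox or button, passing the widget that received the click.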

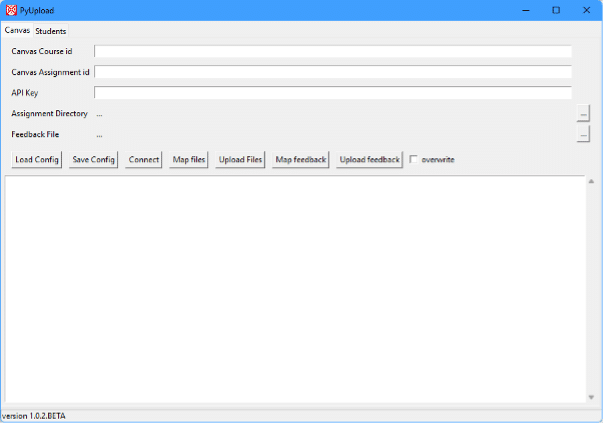

PyUpload

This utility allows (any combination of) student marks, summary comments, and graded work to be uploaded in bulk to Canvas. To use this feature, you must first generate an API key within Canvas to provide the necessary permissions to make updates to your Canvas module. Any files to be uploaded must have a file name that contains the student id of the intended recipient.
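The sketch below illustrates two steps such a bulk upload might involve: pairing files with students by the id in the file name, and preparing marks and comments for Canvas. It is not PyUpload's implementation. The function names are hypothetical, student ids are assumed to be purely numeric, and the form-field names follow my understanding of the bulk `update_grades` endpoint in the Canvas REST API (`POST /api/v1/courses/:course_id/assignments/:assignment_id/submissions/update_grades`), so they should be checked against the Canvas documentation. Nothing is sent over the network here.

```python
import re
from pathlib import Path


def match_files_to_students(filenames, student_ids):
    """Map each student id to the file names that contain it.

    Assumes ids are numeric strings. Whole digit runs are compared,
    so id 40123456 does not match a file named 401234567_essay.docx.
    """
    matches = {sid: [] for sid in student_ids}
    for name in filenames:
        digit_runs = set(re.findall(r"\d+", Path(name).stem))
        for sid in student_ids:
            if sid in digit_runs:
                matches[sid].append(name)
    return matches


def build_grade_fields(marks, comments):
    """Build form fields for a bulk grade update (assumed field names).

    Keys of `marks` and `comments` are taken to be the ids Canvas expects;
    mapping institutional student ids to those is outside this sketch.
    """
    fields = {}
    for sid, mark in marks.items():
        fields[f"grade_data[{sid}][posted_grade]"] = str(mark)
        if sid in comments:
            fields[f"grade_data[{sid}][text_comment]"] = comments[sid]
    return fields


files = ["40123456_report.pdf", "401234567_essay.docx", "notes.txt"]
matched = match_files_to_students(files, ["40123456", "401234567"])
fields = build_grade_fields({"101": 68}, {"101": "Good structure."})
```

Files that match no student (such as `notes.txt` above) are simply left out, which makes it easy to report them to the marker before anything is uploaded. An actual request would also need the access token generated in Canvas, sent in an `Authorization: Bearer` header.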

All the applications grew out of my own module assessments and marking practice. Please get in touch if you have any suggestions for feature enhancements.

Alan Hanna

Queen’s Management School

a.hanna@qub.ac.uk